Moving to the source: co-locating AI data centres with renewable generation

AI data centres can offer locational flexibility, meaning they can be built where they best support the power system. Leveraging this can help ease the integration of their large electricity demand into the grid.

Global electricity demand from data centres will reach 945 billion kilowatt-hours (kWh) by 2030, according to the IEA, which is double current demand. To put this into perspective, it is equivalent to the annual electricity demand of Japan, or 40% of the EU’s demand today. The increase will primarily be driven by the rapid expansion of generative artificial intelligence (AI) technology and the associated demand for computing power. Tech companies, particularly “hyper-scalers” (big tech) that provide or operate large-scale computing infrastructure for training and running AI models, are building increasingly power-intensive data centres. While most new facilities today still require only a few hundred megawatts (MW), some projects under construction are expected to draw up to 1,000 MW (1 GW) from the electricity grid, and plans are emerging for even larger facilities, such as the Stargate project in Texas.

AI data centres are becoming a grid-scale challenge

Although electrification in industry and transport, together with rising electricity demand from space cooling, will still constitute a major part of overall electricity demand growth, data centres are becoming an increasingly important consideration in power system planning. Unlike many other sources of demand growth, data centres represent a large, concentrated load, which requires reinforcement of local grids.

In the United States, particularly in certain regions, the rapid expansion of AI data centres has already begun to create challenges for electricity systems. Since data centres tend to be clustered together, their electricity demand can dominate local consumption. For example, in Santa Clara, around 60% of electricity demand comes from data centres. This can place stress on local grids, increase electricity costs, and – depending on the source of generation – lead to higher air pollution and greenhouse gas emissions. In some cases, these impacts have triggered local opposition and led communities to block new data centre developments.

Similar challenges are emerging in Europe. In response to grid constraints, cities such as Amsterdam and Dublin have imposed bans on new data centres. These constraints are closely linked to the tendency of data centres to be clustered near urban areas, where grid and supply capacity are already limited. At the same time, grid expansion remains one of the fundamental challenges in integrating more renewables into the power system. This new demand coming from data centres adds further pressure.

Against this backdrop, the global AI race has led several countries to prioritise the expansion of national AI infrastructure. For example, the EU’s AI Continent Action Plan aims to at least triple data centre capacity within the next five years. However, the construction of new data centres is facing challenges not only because of delays in grid connection, but also in securing sufficient power generation capacity, as utilities struggle to supply the energy needed to support these investments. In response, tech companies are investing directly in power generation or signing long-term power purchase agreements (PPAs) across a range of generation technologies and fuel sources, including renewables, nuclear and gas. Even more radical concepts, such as space-based data centres, are being discussed.

In this context, we introduce a concept that could help address the growing tension between AI infrastructure expansion and power system constraints.

It can be a lot cheaper to transport data than electricity

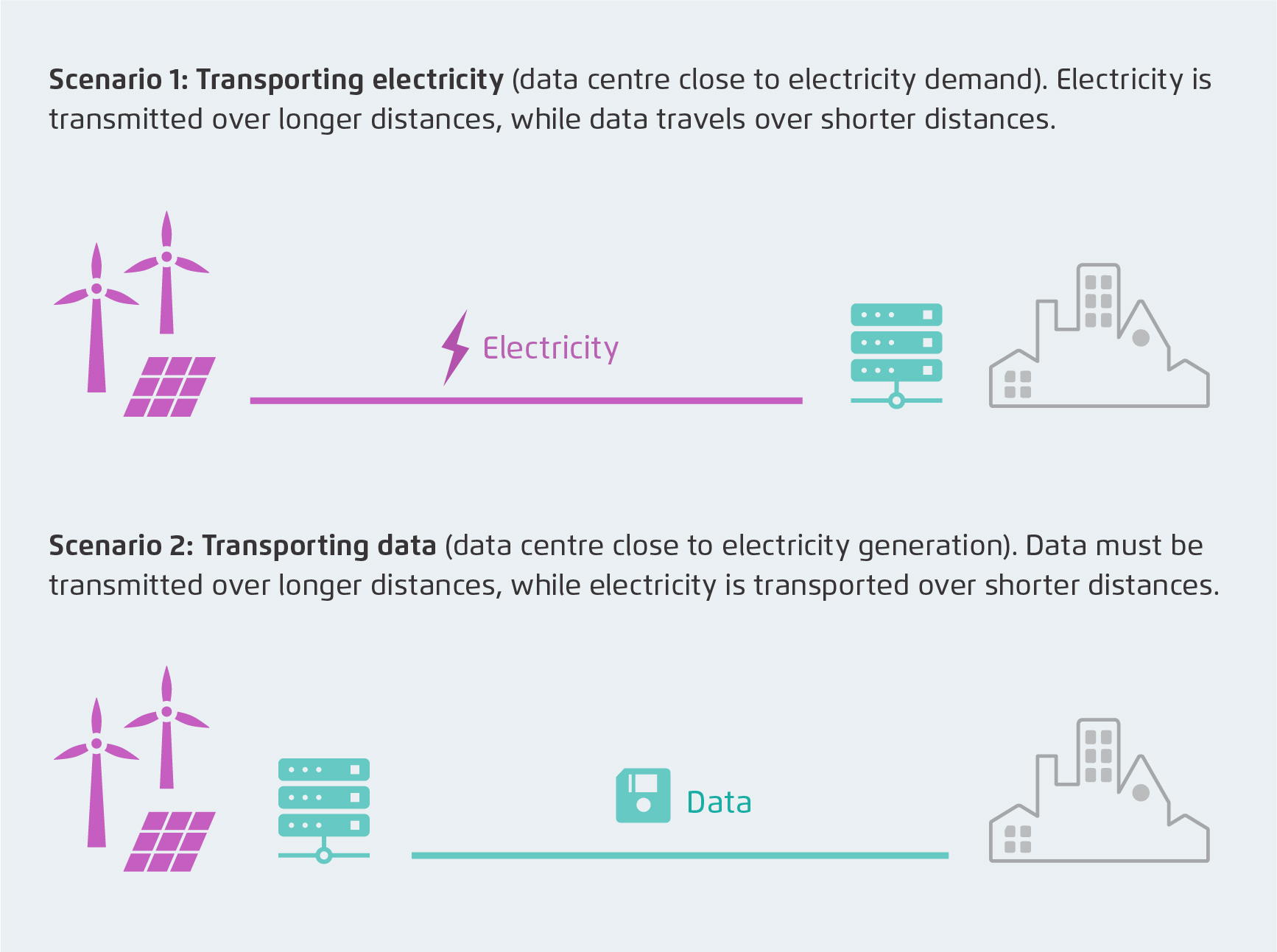

Consider two highly simplified, illustrative scenarios, depicted in the accompanying figure.

A simple illustrative calculation based solely on total investment costs, excluding operational and lifetime costs, provides a rough estimate of the relative cost advantage of transporting data compared with electricity. High-voltage alternating current (HVAC) transmission lines cost around 300,000 to 2,000,000 euros per kilometre (the wide range reflects differences in technology, voltage level and infrastructure type). Meanwhile, optical fibre infrastructure can cost just 14,000 to 43,000 euros per kilometre (the range reflects cost differences between land- and sea-based networks). This stark contrast highlights the fundamentally different cost structures of electricity and data transport networks. In addition to the investment costs considered here, electricity infrastructure incurs significantly higher maintenance costs and losses. As a result, optical fibre infrastructure is far more economical.

Comparing capital costs alone, transporting data can be roughly 0.3 to 2 million euros per kilometre cheaper than transporting electricity, a difference on the order of 10 to 100 times. Simply put: photons flow more freely than electrons.
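The comparison can be reproduced with a few lines of arithmetic. The sketch below uses only the per-kilometre capital cost ranges quoted above; the function name and the choice to pair the cheapest power line with the costliest fibre (and vice versa) to obtain the bounds are illustrative assumptions, not a cost methodology from this commentary.

```python
# Capital cost ranges quoted in the text, in euros per kilometre.
HVAC_EUR_PER_KM = (300_000, 2_000_000)   # high-voltage AC transmission line
FIBRE_EUR_PER_KM = (14_000, 43_000)      # optical fibre (land- or sea-based)


def cost_gap(power_line, fibre):
    """Return ((min, max) saving in EUR/km, (min, max) cost ratio).

    The lower bound pairs the cheapest power line with the costliest
    fibre; the upper bound pairs the costliest line with the cheapest fibre.
    """
    p_lo, p_hi = power_line
    f_lo, f_hi = fibre
    saving = (p_lo - f_hi, p_hi - f_lo)
    ratio = (p_lo / f_hi, p_hi / f_lo)
    return saving, ratio


saving, ratio = cost_gap(HVAC_EUR_PER_KM, FIBRE_EUR_PER_KM)
print(f"saving: {saving[0]:,} to {saving[1]:,} EUR/km")
print(f"ratio:  {ratio[0]:.0f}x to {ratio[1]:.0f}x")
```

Under these pairings the gap spans about 0.26 to 2 million euros per kilometre, or roughly 7 to 143 times, which matches the order of magnitude of the rounded figures in the text.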

A significant part of the AI load has locational flexibility

Relative to their power consumption, AI data centres have less stringent requirements than traditional data centres for latency (the time it takes to get a response to a user query) and bandwidth (the amount of data that needs to be transferred over the network to the user). This means that locating AI data centres further from consumers will neither significantly degrade the user experience nor require large additional data transfer infrastructure. In other words, the nature of these latency and bandwidth requirements makes many AI data centre loads relatively location-agnostic.

Latency: There are two main types of AI workloads: model training and inference (generating responses). For training, latency is largely irrelevant because users do not interact with the model during the process. For inference, total response time is dominated by processing and computation rather than the time required to transmit data to and from the data centre. Unlike applications such as financial trading, real-time cloud gaming, or audio/video calls – where milliseconds make a difference – the bulk of AI-related energy demand comes from latency-insensitive workloads.
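A back-of-the-envelope sketch can illustrate why distance adds little to inference response time. It relies on the standard approximation that light in optical fibre travels at about 200,000 km/s; the 2-second processing time and the example distances are purely hypothetical assumptions, and real network routes are longer than straight-line distances and add switching delays.

```python
# Light in optical fibre travels at roughly two-thirds of its vacuum speed,
# about 200,000 km/s (a common approximation).
FIBRE_SPEED_KM_PER_S = 200_000

# Hypothetical time the model spends computing a response, in milliseconds.
ASSUMED_INFERENCE_MS = 2_000


def round_trip_ms(distance_km: float) -> float:
    """Propagation delay for a request and its response over fibre, in ms."""
    return 2 * distance_km * 1000 / FIBRE_SPEED_KM_PER_S


for km in (100, 1_000, 3_000):  # illustrative distances only
    rtt = round_trip_ms(km)
    share = rtt / (rtt + ASSUMED_INFERENCE_MS)
    print(f"{km:>5} km: {rtt:.0f} ms round trip, {share:.1%} of total response time")
```

Under these assumptions, even a data centre 1,000 km away adds only about 10 ms of propagation delay, a small fraction of the assumed processing time.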

Bandwidth: AI applications transmit comparatively lightweight data, such as text or images, placing relatively lower demands on network bandwidth than services like video streaming. As a result, AI data centres do not require unusually high connectivity to consumers relative to their power consumption.

These characteristics allow AI data centre loads to have considerable locational flexibility. Leveraging this flexibility can help integrate both AI loads and renewable energy in locations that are optimal for the power system.

Co-locating AI data centres with renewables can be a win-win

Given this relative locational flexibility, AI data centres can be sited in regions with high shares of renewable electricity – even if they are far from urban load centres – without compromising service quality. For tech companies, which are already major purchasers of renewable electricity, this would support their net-zero commitments while securing reliable electricity supply.

For the electricity system, such co-location could ease grid constraints and lower electricity costs for all consumers by limiting the need for additional infrastructure. While it would not eliminate the need for grid expansion, it could prevent new AI loads from exacerbating existing bottlenecks and complement ongoing efforts to integrate renewable generation by reducing the need to transport electricity over long distances. This could also present an opportunity to encourage further renewable energy development, as tech companies secure their long-term power supply through investments in sustainable energy sources.

Towards a new form of sector-coupling: electricity and AI infrastructure

Enabling co-location of AI data centres with renewable energy requires context-specific policy instruments and detailed system analysis. This is particularly relevant in regions with known grid constraints, such as the north-south transmission constraints in Germany or the UK and China’s west-east transmission limits, as well as in island systems such as the Philippines or Indonesia.

While electricity availability is a key factor in data centre siting decisions, it is not the only one. Other considerations include access to water for cooling, land availability and proximity to skilled labour. At the same time, data centres generate significant waste heat, which – where district heating networks exist or can be developed – could be utilised as a valuable energy source.

This commentary highlights the potential of a new kind of sector coupling: electricity and information technology – specifically AI. Realising this opportunity will require further collaboration between energy and technology experts, and additional research to translate these ideas into actionable policy.

Key areas for further investigation include:

Project- or country-specific analysis of cost-saving potential from co-locating data centres with renewable energy zones coupled with batteries.

Approaches to distributing costs and benefits between the electricity sector and the information technology sector in this new form of sector coupling.

Assessment of the impact on distribution grids from concentrated data centre loads near urban centres.

Implications of data regulation for locating data centres across borders, including potential EU-wide frameworks.

Opportunities to utilise data centre waste heat in district heating networks, which should be considered when planning locations.

Strategic siting of AI data centres offers policymakers a tangible lever to align the digital and energy transitions. Incentivising co-location with renewable energy generation can ease grid constraints, lowering system costs and accelerating decarbonisation.

Note: This commentary presents the concept at a high level; a significantly more detailed analysis is required to quantify actual savings and broader impacts, including cost calculation methodologies and local context considerations. The authors would also like to thank Jens Gröger (Öko Institut) for the valuable exchange on this topic.

Dr. Samarth Kumar
